Workplace Communications Clearly Improved When Digital Signage Deployed: New Research

January 25, 2024 by Dave Haynes

Google’s Chrome OS team and UK-based CMS software firm ScreenCloud have jointly released findings from extensive survey research about using digital signage for workplace …

ISE 2024 Sixteen:Nine Mixer Sponsor Profile: How ScreenCloud Delivers Automated, Web-Driven Digital Signage Experiences

January 17, 2024 by Dave Haynes

Integrated Systems Europe is now less than two weeks out, and Sixteen:Nine is again doing a Barcelona version of the networking mixers it has been …

ScreenCloud Drives On-Stage Messaging At Lollapalooza

August 7, 2023 by Dave Haynes

Digital signage tech shows up in all kinds of unexpected places, and the stage at an iconic music festival certainly qualifies as unexpected. Mark …

Digital Signage Summit Europe Impressions: Day 2

July 6, 2023 by Dave Haynes

Digital Signage Summit Europe wrapped up today in Munich and I can very honestly say I thought it was great. Very well run. Great …

ISE Sixteen:Nine Mixer Sponsor Profiles: ScreenCloud

January 23, 2023 by Dave Haynes

Integrated Systems Europe is fully back after COVID-era disruptions, and Sixteen:Nine is doing a Barcelona version of the networking mixer it has been doing …

ScreenCloud Launches Own Optimized OS For Digital Signage, And Dedicated Playback Device

November 3, 2022 by Dave Haynes

UK-based CMS software company ScreenCloud has taken the interesting step of building its own variant of the Linux operating system – dubbed ScreenCloud OS – …

Experiential Design Firm Uses Headless CMS For Display Project; “Considerably Easier” Than Traditional Digital Signage Software

August 19, 2022 by Dave Haynes

At least a couple of digital signage CMS firms have gone down the path of offering what they term a headless CMS – the …

ScreenCloud Announces New Purpose-Built Operating System And Sub-$200 Digital Signage Playout Device

October 4, 2021 by Dave Haynes

The UK-based digital signage CMS firm ScreenCloud has taken the interesting step of putting two years of R&D time and money into developing a …

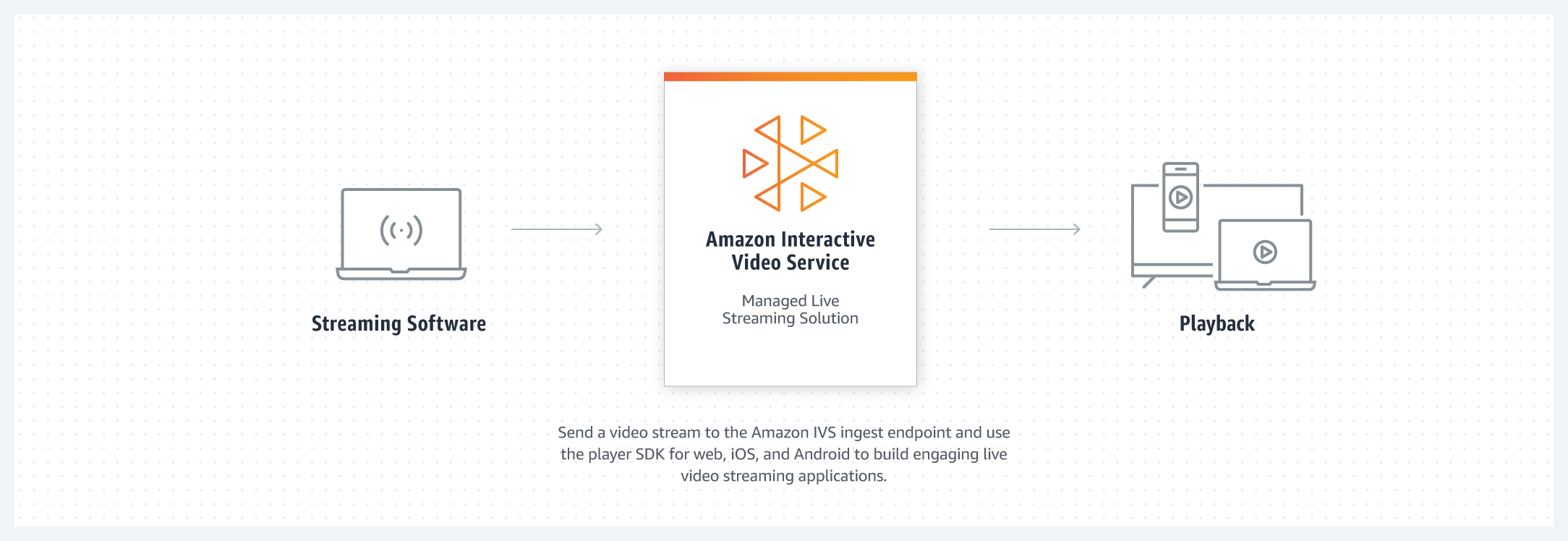

Amazon Starts Marketing Its Own Branded Line Of “Smart TVs” With Fire TV Built-In

September 10, 2021 by Dave Haynes

Acknowledging the many, oft-discussed reasons why people putting in digital signage networks DON’T want to use TVs and DO want to use commercial displays, …
