Sharp NEC’s New NaviSense AI Platform More About Actionable Insights Than Instant Content Triggers

June 30, 2022 by Dave Haynes

When I was at InfoComm earlier this month, I had a quick walk-thru and chat at the Sharp NEC booth and asked, among a few things, about a new computer vision platform called NaviSense that was being demonstrated.

Was this, I asked, an evolved version of the ALP platform developed and marketed by NEC just a few years ago, BEFORE the joint venture/merger of the two Japanese tech firms?

Pretty much, I was told. But I have since learned it’s actually quite different and has no particularly tangible ties or code from the mothballed ALP platform, other than lessons learned.

The bullet points on this new iteration of computer vision, built from scratch in the U.S. in the last couple of years, are:

- lower costs, in part by being able to run the edge computing on low-cost Raspberry Pi 4s and use inexpensive, consumer-grade 720p cameras to capture video streams and do the pattern detection work;

- on-premise software instead of just cloud-hosted by the display company;

- API access that ALP didn’t have;

- expanded focus beyond retail and more emphasis on revealing what’s going on in an environment, as opposed to serving 1:1 ads based on the category of viewer, moment by moment.

The product has a partner – Guise AI – which seems to be some sort of edge-optimization tech for AI applications like computer vision. “Our technology deployed at the edge delivers feedback rapidly and locally within the system,” says the PR. “Localized processing is more efficient (low latency) and it increases the level of security in terms of data privacy. Clients and OEMS who require an accurate, cost-efficient, and secure solution turn to Guise AI to continuously extract patterns and predict from real-time and dynamically changing data to create the greatest impact for end-users.”

I wrote a bit about this earlier, but got a deeper briefing and clarification this week from Kelly Harlin, who was prime for ALP when it was launched by NEC, and is now with the blended company, leading the NaviSense product.

The key messages Sharp NEC is driving are:

- Cost-effective, easy-to-use edge computing and computer vision solution that gathers key environmental data points to drive business insights;

- Deploy-ready on a variety of computing devices from RPi4 to Intel SDM;

- Originally developed to deploy on AndroidOS, but can deploy on a customer’s existing Windows-based devices;

- Uses existing camera equipment and data dashboards, and exports data through an open REST API in standard JSON format;

- Customizable data sets as customer needs evolve;

- Data that can be exported to a database of choice, locally or on the customer’s cloud.
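To make the export bullets concrete, here is a minimal sketch of what consuming that kind of data could look like. The JSON field names (`sensor_id`, `timestamp`, `metric`, `value`) and the `store_events` helper are assumptions for illustration, not NaviSense's actual schema or API. The sample payload stands in for the body of a REST response, and an in-memory SQLite database stands in for the "database of choice."

```python
import json
import sqlite3

# Hypothetical payload standing in for a REST response body.
# The field names are assumptions, not a documented vendor schema.
SAMPLE_PAYLOAD = """
[
  {"sensor_id": "cam-01", "timestamp": "2022-06-30T10:00:00Z", "metric": "people_count", "value": 12},
  {"sensor_id": "cam-01", "timestamp": "2022-06-30T10:05:00Z", "metric": "people_count", "value": 17},
  {"sensor_id": "cam-02", "timestamp": "2022-06-30T10:00:00Z", "metric": "cart_count", "value": 3}
]
"""


def store_events(conn, payload):
    """Parse a JSON array of events and insert them into a local table."""
    events = json.loads(payload)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS events "
        "(sensor_id TEXT, timestamp TEXT, metric TEXT, value REAL)"
    )
    # Named placeholders map directly onto the keys of each JSON object.
    conn.executemany(
        "INSERT INTO events VALUES (:sensor_id, :timestamp, :metric, :value)",
        events,
    )
    conn.commit()
    return len(events)


conn = sqlite3.connect(":memory:")
inserted = store_events(conn, SAMPLE_PAYLOAD)

# Once the data is in a database, dashboards are ordinary queries.
total_people = conn.execute(
    "SELECT SUM(value) FROM events WHERE metric = 'people_count'"
).fetchone()[0]
print(inserted, total_people)
```

Swapping SQLite for a customer's own cloud database would change the connection line and little else, which is presumably the appeal of a plain JSON export.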

What was interesting to me is how Harlin says this is and will be used. A lot of marketing energy around computer vision applications for digital signage has gone into the simplistic idea that messaging can be served based on the profile of who is in front of a screen at a given moment. I’ve never been all that enthused about that, because viewer time is very fleeting (a matter of seconds), viewers change and multiple people might be looking at once, and all the machine learning in the world isn’t necessarily going to nail what gets the interest and response for a demographic type. Not all 64-year-old men, like me, have similar consumer interests and wants.

“The problem with that is that was almost a one-to-one content triggering situation,” says Harlin. “We had that kind of feedback from customers. There are a lot of other great platforms out there that can trigger, but they’re still trying to figure out what’s the value of tuning messages to one person.”

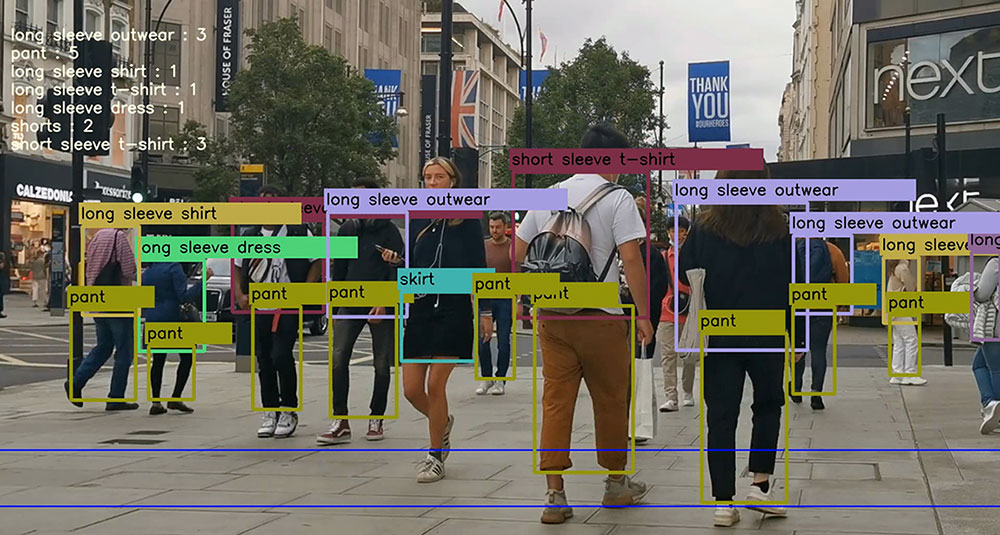

Instead of making that a focus, a lot of what NaviSense is encouraging and supporting is computer vision applications that provide insights into how a place works and how people use it and move around it. Pattern detection can do things like count, for a sporting goods retailer, the number of Nike logos that come through a store, versus people with Puma, Adidas or Lululemon.

Harlin showed me video from an airport ramp that was capturing and logging the frenetic activity surrounding unloading luggage from a commercial jet, logging time and flagging things like safety violations.

The AI can learn patterns, learn colors and other variables and start to generate data for dashboards that give venue operators or solutions providers or brands a better sense of whatever needs to be understood, even things like removing uniformed employees from head counts and traffic flow analyses by looking for the logos and colors of those uniforms. It could tell a big box store the shopping cart corrals are almost empty, and signal a need to round more carts up before there’s a shopper revolt.
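The uniform-filtering idea can be sketched in a few lines. This assumes the vision layer has already tagged each tracked person with a dominant clothing color and any detected logo; the `Detection` fields, the `UNIFORM_COLORS` and `UNIFORM_LOGOS` sets, and the "StoreCo" name are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical uniform definition: staff wear red shirts with a store logo.
UNIFORM_COLORS = {"red"}
UNIFORM_LOGOS = {"StoreCo"}


@dataclass
class Detection:
    """One tracked person, as an upstream vision model might report it."""
    track_id: int
    dominant_color: str
    logo: Optional[str] = None


def is_employee(d):
    """Treat a person as staff only if both color and logo match the uniform."""
    return d.dominant_color in UNIFORM_COLORS and d.logo in UNIFORM_LOGOS


detections = [
    Detection(1, "red", "StoreCo"),  # staff member
    Detection(2, "blue", "Nike"),    # shopper
    Detection(3, "red"),             # shopper who happens to wear red
    Detection(4, "green"),           # shopper
]

shoppers = [d for d in detections if not is_employee(d)]
print(len(shoppers), "shoppers out of", len(detections), "people")
```

Requiring both signals is a design choice in this sketch: matching on color alone would wrongly drop the shopper in the red shirt.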

It could also do things like conditional messaging: watching TSA screening lines at an airport, flagging on an operations dashboard that wait times are escalating so a new line gets opened, and then changing the messaging on screens to reflect revised estimated wait times and advise that a new screening checkpoint has opened (which would start to load-balance the airport screening lines).
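As a rough sketch of that conditional logic, assume the vision system already produces an estimated wait in minutes for each checkpoint. The 20-minute threshold, the checkpoint names, and both function names are assumptions for illustration.

```python
# Assumed cutoff: if even the shortest line is longer than this, alert ops.
THRESHOLD_MINUTES = 20


def needs_new_lane(waits):
    """True when every open checkpoint exceeds the wait threshold."""
    return min(waits.values()) > THRESHOLD_MINUTES


def screen_message(waits):
    """Build the on-screen text pointing travelers at the shortest line."""
    best = min(waits, key=waits.get)
    return f"Shortest wait: {best}, about {waits[best]} min"


# Before: both lines are backed up, so the dashboard flags it.
before = {"Checkpoint A": 28, "Checkpoint B": 24}
print(needs_new_lane(before))

# After operations opens a third checkpoint, the screens update.
after = {"Checkpoint A": 18, "Checkpoint B": 15, "Checkpoint C": 5}
print(needs_new_lane(after))
print(screen_message(after))
```

The same wait-time data drives both the operations alert and the signage message, which is the load-balancing loop described above.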

I’ve thought a lot of the marketing activity involving computer vision/video analytics/pattern detection tech has been flawed through the years, because it has been wrongly labeled as facial recognition (causing unnecessary privacy concerns) and because far too much emphasis has been placed on how it might help sell more stuff. I was encouraged, at a software company’s InfoComm stand, to watch how on-screen messaging was changed by who was in front of the screen. Like it was magic. I politely told my vendor friend I saw Intel do that 10-plus years ago, and it wasn’t all that interesting even back then.

But AI that gathers insights and tells operators how things work, where people go, where there are crowds and where there are dead spots, and any other characteristics that are built around looking for and counting patterns, that’s interesting.

Borrowing from that Nike logo thing, a big sporting goods retailer might have a lot of interest in knowing, beyond simple observation and POS data, the brand preferences of shoppers. By counting logos on hats and chests and shoes and whatever, a retail chain could give (or resell) athletic wear brands insights on whether stores in a city or region are Nike-dominant stores and perhaps where more people are coming in with Under Armour gear on, and then market/respond accordingly.
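The counting side of that is simple once logo detections exist. A minimal sketch, assuming each detection arrives as a (store, brand) pair; the store IDs and sample sightings are made up, and `dominant_brand_by_store` is a hypothetical helper.

```python
from collections import Counter, defaultdict

# Made-up logo sightings: (store ID, brand detected on a shopper).
sightings = [
    ("Miami-01", "Nike"),
    ("Miami-01", "Nike"),
    ("Miami-01", "Under Armour"),
    ("Dallas-02", "Under Armour"),
    ("Dallas-02", "Under Armour"),
    ("Dallas-02", "Nike"),
    ("Dallas-02", "Adidas"),
]


def dominant_brand_by_store(sightings):
    """Return {store: (top brand, share of that store's logo sightings)}."""
    counts = defaultdict(Counter)
    for store, brand in sightings:
        counts[store][brand] += 1

    result = {}
    for store, brand_counts in counts.items():
        brand, n = brand_counts.most_common(1)[0]
        share = round(n / sum(brand_counts.values()), 2)
        result[store] = (brand, share)
    return result


for store, (brand, share) in dominant_brand_by_store(sightings).items():
    print(f"{store}: {brand} leads with {share:.0%} of sightings")
```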

It’s not an overly new idea, but arguably a better way to go. Miami’s AdMobilize, which does similar work, about five years ago launched a Vehicle Recognition Engine that used computer vision to count and categorize approaching cars on roadways. That was presented as a tool for OOH and DOOH advertisers who wanted to target ad efforts, let’s say, to roadways that see a lot of non-commercial trucks, or roadways with a lot of smaller SUVs and minivans.
