How HDR Is Transforming Digital Displays

May 23, 2019 by guest author, sixteenninewpadmin

Guest Post: Aaron Cheesman, D3 Dynamic Digital Displays

The video industry has gone through monumental changes over the years. However, for a long time, the focus was on producing thinner screens and higher resolutions.

On the latter front, we’ve reached a point of diminishing returns where, unless you’re sitting particularly close, your eyes may not even be able to tell the difference between last year’s ultra-high-definition display and the latest ultra-ultra-high-definition display.

Today, the biggest leap forward in image quality is not about achieving higher resolution. It’s HDR, or High Dynamic Range, which applies advances in brightness, contrast and color depth to create a far more true-to-life experience.

More Bits, More Colors, More Contrast

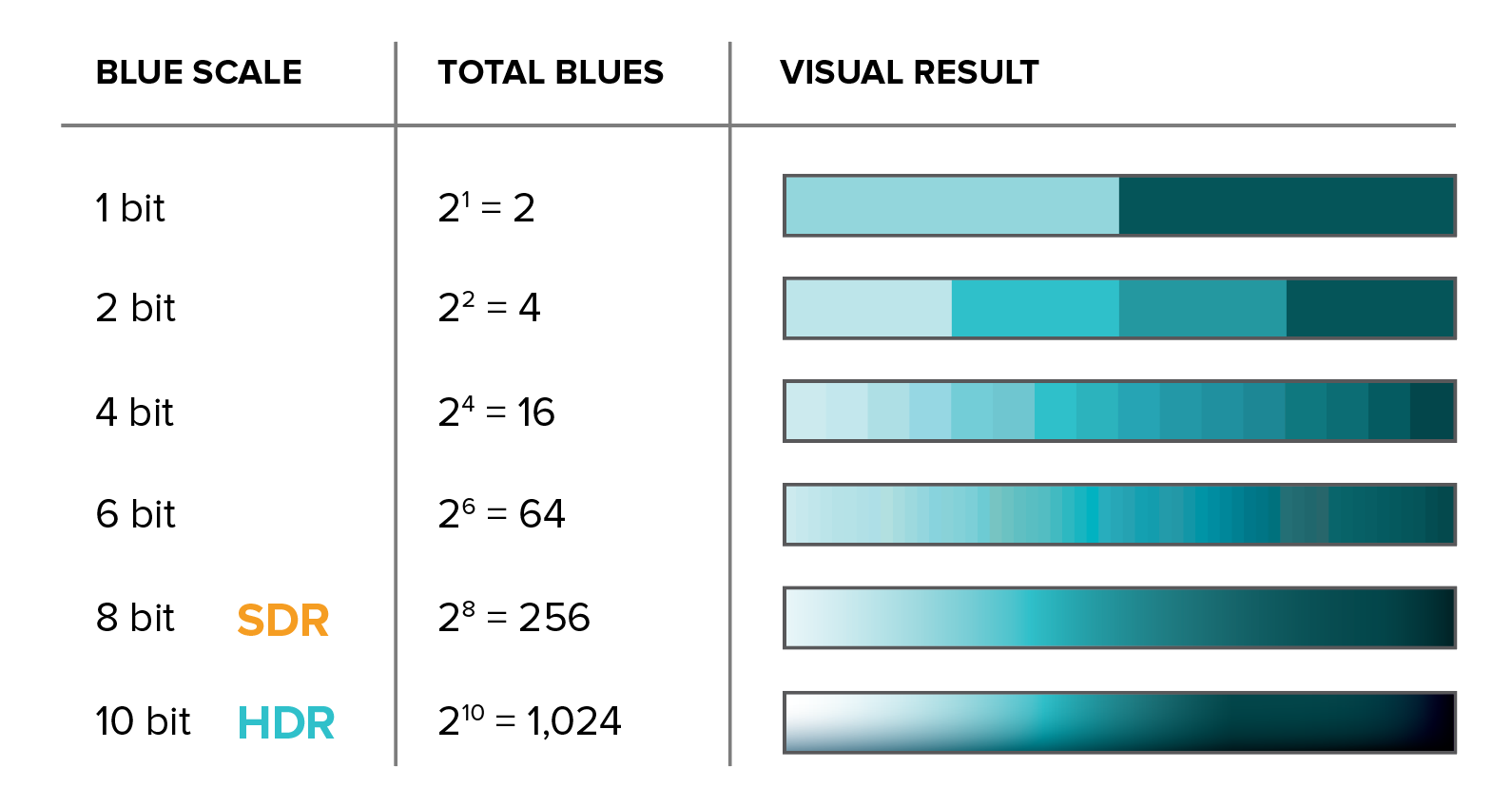

A good example of how color depth affects viewing experience is found in the phenomenon of “banding.” Consider a basic display with a gradient on screen. If you can see bands where the color steps from one value to the next, that means the display can’t produce enough variations in between. Banding is to limited color depth as pixelation is to limited resolution.
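To put rough numbers on banding, here is a minimal Python sketch. It assumes a single color channel ramping smoothly from darkest to brightest across a 3,840-pixel-wide screen; the width and the function names are illustrative choices, not anything specific to a particular display.

```python
def quantize_gradient(width, bits):
    """Map a smooth left-to-right ramp onto the integer codes one channel can store."""
    max_code = 2 ** bits - 1
    return [round(x / (width - 1) * max_code) for x in range(width)]


def band_width(width, bits):
    """Average number of neighboring pixels that end up sharing one code value."""
    ramp = quantize_gradient(width, bits)
    return width / len(set(ramp))


WIDTH = 3840  # assumed screen width in pixels
for bits in (8, 10):
    print(f"{bits}-bit: each band is about {band_width(WIDTH, bits)} pixels wide")
```

Under these assumptions, the 8-bit ramp forms bands about 15 pixels wide, while the 10-bit ramp narrows them to under 4 pixels, which is much harder for the eye to pick out.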

Standard Dynamic Range (the current industry standard) uses 8-bit color, meaning 8 bits for each of the red, green and blue channels. You can make a lot of colors with 256 reds, 256 blues and 256 greens. HDR protocols require at least 10-bit color. That may not sound like a huge leap to the layperson, but it means that we’re now working with 1,024 reds x 1,024 blues x 1,024 greens. We’ve gone from roughly 16.7 million distinct colors to over a billion.
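The arithmetic behind those totals is easy to check. The short Python sketch below assumes three channels (red, green and blue) with the same bit depth each; the function name is just for illustration.

```python
def colors_for_bit_depth(bits_per_channel, channels=3):
    """Count the distinct colors available at a given per-channel bit depth."""
    shades_per_channel = 2 ** bits_per_channel
    return shades_per_channel ** channels


sdr_colors = colors_for_bit_depth(8)    # 256 x 256 x 256
hdr_colors = colors_for_bit_depth(10)   # 1,024 x 1,024 x 1,024

print(f"8-bit:  {sdr_colors:,} colors")
print(f"10-bit: {hdr_colors:,} colors")
print(f"10-bit offers {hdr_colors // sdr_colors}x as many colors")
```

Two extra bits per channel quadruple the shades in each channel, so the total palette grows by a factor of 4 x 4 x 4 = 64.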

Brighter Brights and Darker Darks

The human eye is capable of registering a vast range of luminance, from the dimmest star to blinding daylight. HDR takes advantage of major improvements in modern display capabilities.

Standard Dynamic Range was based on the limitations of cathode ray tubes, whose darkest tones were nowhere near true black and whose brightest highlights were still rather dim. High Dynamic Range captures and displays a much wider range of brightness levels as well as more subtle gradations between shades.
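One common way to express dynamic range is in "stops," where each stop is a doubling of light between the darkest and brightest tones a screen can show. The Python sketch below uses made-up peak and black levels (in nits) purely for illustration; they are assumptions, not measurements of any real display.

```python
import math


def dynamic_range_stops(peak_nits, black_nits):
    """Dynamic range in stops: how many doublings separate black from peak white."""
    return math.log2(peak_nits / black_nits)


# Hypothetical figures chosen only to show the calculation.
sdr_like = dynamic_range_stops(peak_nits=100, black_nits=0.1)
hdr_like = dynamic_range_stops(peak_nits=1000, black_nits=0.005)

print(f"SDR-like display: {sdr_like:.1f} stops")
print(f"HDR-like display: {hdr_like:.1f} stops")
```

With these assumed figures, the brighter peak and the deeper black each add several stops, which is why both ends of the range matter.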

The HDR Ecosystem

There’s much more to HDR than just a great screen. True HDR requires a whole series of components working together to capture, process and render images containing far more information than before. That means you have to begin by creating native HDR content. (HDR photography has been around for some time, but HDR video cameras, which use an entirely different capture process, are still a bit exotic.)

Once the content has been developed, you need HDR gear at every step – Playback, Interface, Video Processor, Driver, and Display – to render it. If any element in that series is not HDR-compatible, you will not see a true HDR result.
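The "weakest link" idea can be sketched in a few lines of Python. The stage names come from the list above; the `Stage` class, the `hdr_capable` flag and the example chain are hypothetical and only model the logic, not any real product.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    hdr_capable: bool


def first_sdr_bottleneck(chain):
    """Return the name of the first stage that cannot pass HDR, or None if all can."""
    for stage in chain:
        if not stage.hdr_capable:
            return stage.name
    return None


# Hypothetical signal chain in which one link is SDR-only.
chain = [
    Stage("playback", True),
    Stage("interface", True),
    Stage("video processor", False),
    Stage("driver", True),
    Stage("display", True),
]

bottleneck = first_sdr_bottleneck(chain)
print("True HDR" if bottleneck is None else f"No true HDR: limited by {bottleneck}")
```

Even with an HDR display at the end, this example chain fails the check because of the video processor in the middle.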

While many LED companies are chasing individual links in the HDR chain, very few are working on an end-to-end solution. My company is one of the exceptions, as D3 engineered an entire HDR package that includes everything but the camera.

HDR is clearly the way of the future. However, some specifics have yet to take shape. As of this writing, there are several competing HDR standards, with names such as HDR10, Dolby Vision, Advanced HDR, Hybrid Log-Gamma and HDR10+.

Some are open format, some are proprietary. Some favor streaming, others broadcasting. As with previous format wars, it is the content creators who will likely have the final say on which protocols become international standards. This is a space worth watching closely.

Want to learn more? D3 Dynamic Digital Displays, where I work, put out a free, highly readable white paper on the subject.

Great article, Aaron. Very true that one needs an end-to-end solution. Our BrightSign Series 4 players are a great choice in this respect.

https://www.brightsign.biz/key-features/hdr10

https://www.brightsign.biz/key-features/dolby-vision