Kinect Tweaked To Turn Any Surface Into Touchscreen

May 28, 2012 by Dave Haynes

The UK version of Wired has a piece up about a German startup that is using Kinect to turn any surface into a touchscreen – and by that I mean surfaces like walls and whiteboards.

Munich-based Ubi Interactive uses the motion-tracking and depth-perception cameras in the Kinect to detect where a person is pointing, swiping and pinching to emulate the behavior of a big-ass touchscreen or interactive whiteboard.

Kinect, as you likely know, has until now been used for gestures made at a distance, i.e. wave a hand from five feet away and make something happen on a screen.

Video Here: http://www.wired.co.uk/news/archive/2012-05/25/ubi-interactive

The company is working directly with Microsoft and did a demo recently at a Microsoft lab in Seattle. The people who saw it were impressed.

A conventional boardroom projector lit up a pane of frosted glass that was suspended in the centre of a low-lit office. On the other side of the pane was a Kinect sensor, which was capturing the movements and gestures of our hands in front of the glass and sending the data to Ubi’s software, running on the same Windows PC that was sending the live image to a projector.

Responsiveness was excellent, with only a split second delay between performing a gesture and action happening on-screen. We played with a 3D model of Earth (as used on Microsoft’s Surface), using two hands to zoom in and out of the virtual planet, spin the globe around and locate ourselves in downtown Seattle. Naturally, this was followed by a successful test of Rovio’s Angry Birds.

Being able to play a PC version of Angry Birds highlights an important aspect of Ubi’s software-based system — it works with Windows’ built-in touchscreen support and works with any PC application.

“It’s all Windows touch-based gestures,” Chathoth explained. “We wanted to start with an experience everyone knows, but we can open up our API for 3D gestures. It knows exactly how far your fingertip is from the surface — when you actually touch it, that’s a click; when you’re not touching, it becomes a hovering motion.”

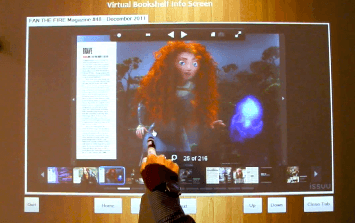

This depth-based action was also demonstrated. Hovering a finger over a strip of book covers projected onto a wall let you gesture left and right to browse titles from an ebook download store’s app. Actually tapping one of the covers selected that book for download.
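To make the touch-versus-hover idea concrete, here is a minimal Python sketch of how a fingertip's distance from a surface could be mapped to a click or a hover. This is not Ubi's code or API: the function name, the state names and both thresholds are assumptions made up for illustration, and a real system would first have to derive the distance from the Kinect's depth data.

```python
from enum import Enum


class TouchState(Enum):
    NONE = "none"    # finger too far away to count as interaction
    HOVER = "hover"  # finger near the surface but not on it
    TOUCH = "touch"  # finger on the surface, treated as a click


# Hypothetical thresholds in millimetres; not values from Ubi's software.
TOUCH_MAX_MM = 10.0
HOVER_MAX_MM = 150.0


def classify_fingertip(distance_mm: float) -> TouchState:
    """Map a fingertip's distance from the surface to a touch state.

    Readings at or below TOUCH_MAX_MM count as a touch, which also absorbs
    slightly negative values caused by sensor noise.
    """
    if distance_mm <= TOUCH_MAX_MM:
        return TouchState.TOUCH
    if distance_mm <= HOVER_MAX_MM:
        return TouchState.HOVER
    return TouchState.NONE


if __name__ == "__main__":
    for reading in (2.0, 60.0, 400.0):
        print(reading, classify_fingertip(reading).value)
```

In the ebook example, the hover state would drive the left and right browsing, while the touch state would select a cover.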

Ubi is one of 11 startups that won $20,000 (£12,300) of funding and support from Microsoft as part of its Kinect Accelerator programme, but it remains independent as a startup. Its business model has several aspects: selling the software to individuals who already have a Kinect and a projector; selling a unit that bundles together a projector with a Kinect, as well as the software; retrofitting office spaces with Kinect sensors and software; working with advertisers and outdoor agencies who want to add interactivity to public displays and surfaces.

“We are already deploying some advertising displays,” Chathoth explained. “It’s attractive to a customer because of the cost, the ease of deployment; it’s portable — an 80-inch screen you can carry inside a laptop case — it’s flexible and it’s also an engaging experience.”

The software itself, Chathoth noted, would cost an individual “close to $500” (£320), and the company is keeping its options open for working with companies who want to integrate richer 3D applications that require custom gestures and software APIs.

He also highlighted the potential uses within educational establishments. “Kids can go and scratch on a wall, but nothing happens,” Chathoth said, noting that using a regular wall instead of a costly smart whiteboard means there’s nothing a particularly aggressive pair of young hands could break.

This is intriguing. I don’t see a lot of value in the Kinect-based, gesture-driven things I have seen at trade shows and in demo videos, but the idea of using projection to make a non-interactive surface like a blank wall, tabletop or museum exhibit interactive has some possibilities in terms of reduced costs and the elements of surprise and sheer whimsy (I wasn’t expecting to interact with <blank>).

The company is part of a Kinect accelerator program, which means it has the support in dollars and guidance to actually bring something off a test bench and into the marketplace.
