DPAA Ad Metrics Guidelines … for Dummies

September 8, 2011 by Dave Haynes

I’d imagine there are two camps out there when it comes to the Digital Place-Based Advertising Association’s published guidelines for measuring the digital screen advertising industry.

There’s probably a small camp of research types who love this sort of thing, as well as people at a few of the companies that are members of the DPAA who were forced to spend enough time on it that some of the material actually sank in.

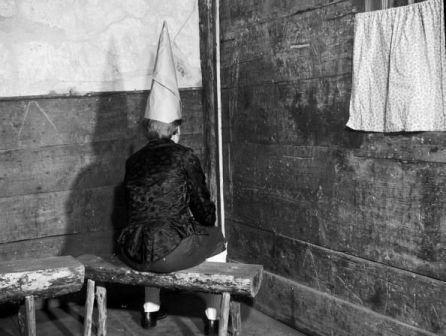

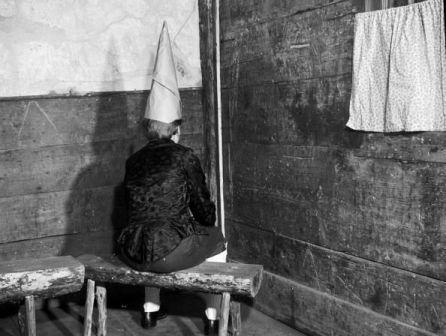

Then there’s the rest of us — the great unwashed of this industry — who probably took a couple of runs at reading the report when it was released with considerable hoo-hah, and uttered the only phrase really possible: “Huh?”

The guidelines, first published when the DPAA was known as the Out-of-home Video Advertising Bureau (OVAB), are a well-considered, well-conceived, entirely laudable work. They were produced with the best intentions of starting to herd the industry cats and get the many, many, many network operators out there to start measuring and selling what they’ve got in some roughly harmonious fashion.

Without such guidelines, the people in media planning groups and at brands who control media spend will continue to look at the digital out of home industry as an interesting sideline that’s WAY too perplexing to understand, validate and buy.

Penetrating the impenetrable

But the guidelines, to be kind, are tough going. For the time-crunched or nominally interested, they are pretty much impenetrable.

Read the core statement of the thing: Average Unit Audience should be the currency metric for out-of-home video networks. Average Unit Audience is defined as the number and type of people exposed to the media vehicle with an opportunity to see a unit of time equal to the typical advertising unit.

A handful, and I do mean handful, of people will read that over and conclude, “Ok, yup. Good. Got it.”

The rest of us will more likely be bug-eyed and blinking, with bubbles forming on our lips after eight times through it.

For the research nerds, and ad sales operations types, the guidelines have a lot of good, deep thinking and plenty of rationalization and back-up on why the guidelines were shaped this way.

But the document is too big, too deep and too laden with new jargon to be absorbed and, more to the point, embraced and used. Much of this industry will succeed or fail based on the ability to win advertising and brand marketing dollars, and that means we should ALL know at least a little about the measurement standards that are being created and advised to give the industry credibility. My fear is most of us looked at the original OVAB guidelines, and decided we were going to need to set some time aside later to really read and absorb them. And because we’re all stinkin’ busy, that time hasn’t been set aside and many of us have moved on.

A guideline for dummies

So, for my own benefit, and sanity, I read the guidelines over (and over) and boiled them down to what I am calling the DPAA (OVAB) Guidelines for Dummies.

I started down this path trying to break down the sections into easy-read, easy-understand versions, but abandoned that concept. There’s a lot in there, and for those truly interested, it’s not that hard to digest once you get over the hump of some tortured phrasing. My intention was to break down the core thinking into something more easily understood and remembered for those of us who just need to know the highlights.

The background to the guidelines is straightforward. There are countless digital screen networks out there now, and more coming. Just about all of them have their own way of measuring their viewing audience, and there is little in common between them. The venues, from corner stores to airports, buses to elevators, are too diverse to even imagine a single measurement system. But the thinking was that maybe a common set of measurement standards would lead to results that could be compared by the agency and brand people who would buy time on these kinds of networks.

“If each audience measurement study strives to produce the same set of metrics with an acceptable level of research quality,” say the guidelines, “and the data reporting is harmonized, the results can be safely compared for any number of typical media buying and planning analyses.”

The people who put the guidelines together recognized that many of the companies in this space do not have pockets deep enough to fund really high quality measurement. The net result is a set of fairly elemental guidelines that might make media research uber-nerds roll their eyes. But these initial guidelines are a foundation, and it will be in the interest of everyone to do deeper (read: more expensive) research as the industry matures and research budgets grow.

The nut of it

With that stated, here’s the nut of it:

This is all about sorting out how many people had the opportunity to see an ad, therefore defining the network and venue’s advertising value. The key characteristics are presence, notice and dwell time.

The product of these guidelines is a single number, spit out at the end of a calculation, that accounts for the following:

• the foot traffic in a venue, like a medical clinic

• the foot traffic in the area(s) where the screens are up and running

• what percentage of that foot traffic actually looked at the screen

• dwell time in the vicinity of the screen

• length of the playlist loop

What this does is level out the measurement for different networks that can claim similar foot traffic but, because of the other variables, may actually be delivering very different ad impression/eyeball numbers.

Working this out

For example, take two clinics that both claim to run 5,000 people through their doors each month.

Each clinic is a “venue” in DPAA terms and the raw number of people coming into the venue is labeled venue traffic. The actual screen or screens are media vehicles.

In both cases, 80 per cent of the people end up parking their butts in the area where the “media vehicles” are running (this area, in OVAB’s terminology, is the “vehicle zone”).

So based on that 80 per cent, the potential audience is reduced by 20 per cent to 4,000. That number, says the DPAA, is the “vehicle traffic.”

For those 4,000 people, in both cases, surveys or other technologies sort out that 75 per cent, on average, actually notice the screen. So that reduces the number to 3,000, which OVAB calls the “vehicle audience.”

Now here’s where things start to diverge.

In clinic A, the average dwell time is 10 minutes (600 seconds) and the playlist loop time matches that. So of those 3,000 people who noticed the screen, all of them had the opportunity to see all of the ads and other content in the playlist loop. That means the final number, the “average unit gross impressions,” is 3,000.

In clinic B, the average dwell time is 10 minutes (600 seconds) but the playlist loop time is 30 minutes. So the playlist is three times longer than the dwell time available for people to be exposed to all the ads in the rotation. The result is that the audience only had an average 33 per cent exposure opportunity, which reduces the overall 3,000 number to just 1,000 “average unit gross impressions.”

So two medical clinic networks that may be selling their ad inventory on the basis of pure foot traffic have, using these guidelines, very different actual audiences when these calculations are used.

| Network example | Clinic A | Clinic B |
| --- | --- | --- |
| Venue traffic | 5,000 | 5,000 |
| % in the vehicle zone (near screen) | 80% | 80% |
| Resulting vehicle traffic | 4,000 | 4,000 |
| % who notice the screens (vehicle) | 75% | 75% |
| Resulting vehicle (screens) audience | 3,000 | 3,000 |
| Dwell time near screens | 600 seconds | 600 seconds |
| Content loop time | 600 seconds | 1,800 seconds |
| Match of dwell and loop times | 100% | 33% |
| Average unit gross impressions | 3,000 | 1,000 |

Another example: A network in a train station may have 100,000 people a day near the screens, but if only 10 per cent look, the dwell time is only 10 seconds and the loop is 60 seconds, the actual gross impressions per day work out to roughly 1,700 (10,000 viewers times a one-in-six exposure opportunity).
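For anyone who would rather see the arithmetic than read it, here is a minimal Python sketch of the calculation as I have described it in this post. It is not taken from the guidelines themselves: the function name and parameter names are my own inventions, and it assumes the dwell-to-loop ratio is applied as a straight multiplier with no cap, which is a simplification.

```python
def average_unit_impressions(venue_traffic, zone_pct, notice_pct,
                             dwell_seconds, loop_seconds):
    """Illustrative only: names and structure are assumptions, not DPAA terms.

    Returns (vehicle_traffic, vehicle_audience, impressions).
    """
    # People who end up in the area where the screens are running
    vehicle_traffic = venue_traffic * zone_pct / 100
    # Of those, the people who actually notice the screen
    vehicle_audience = vehicle_traffic * notice_pct / 100
    # Scale by how much of the loop the average person is around for
    # (assumption: a plain ratio, not capped at 100 per cent)
    impressions = vehicle_audience * dwell_seconds / loop_seconds
    return vehicle_traffic, vehicle_audience, impressions


examples = {
    "Clinic A": average_unit_impressions(5000, 80, 75, 600, 600),
    "Clinic B": average_unit_impressions(5000, 80, 75, 600, 1800),
    # The 100,000 figure is already traffic near the screens, so zone_pct is 100
    "Train station": average_unit_impressions(100000, 100, 10, 10, 60),
}

for name, (traffic, audience, impressions) in examples.items():
    print(f"{name}: vehicle traffic {round(traffic):,}, "
          f"vehicle audience {round(audience):,}, "
          f"average unit gross impressions {round(impressions):,}")
```

Run as written, the two clinics come out at 3,000 and 1,000, and the train station at about 1,667 a day, in line with the rough figure quoted above.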

The Eureka moment

It was doing this calculation, a fairly close variation on one that has been used in my own industry circle of friends for years, that finally gave me the Eureka moment. The words are tough to grasp but the calculation makes it pretty simple.

It allowed me to go back to this tortured statement:

Average Unit Audience should be the currency metric for out-of-home video networks. Average Unit Audience is defined as the number and type of people exposed to the media vehicle with an opportunity to see a unit of time equal to the typical advertising unit.

And turn it into this:

Average Unit Audience should be the standard measurement used for digital screen networks. That measure provides a common way to define how many people had an opportunity to see the ads on screens installed in network venues, and how many of those people were around those screens long enough to see all the ads that were scheduled to run. Ideally, the average amount of dwell time around the screens is equal to the length of the ad loop. If not, the average unit audience may be larger or smaller depending on that dwell time and loop length.

The guidelines go much, much deeper than this, and talk about variables such as networks with a content/ad mix, things like reach and frequency and the longer view approach that goes beyond audience measurement, into performance measurement and attitudes towards networks. There’s lots of good stuff in there, and if you have the time and the inclination, do drill down.

If not, and you just need to have a base understanding of what all the fuss was about, hopefully this cleared the fog a little for you.
